The totally equalized state will require hundreds of years to play out regardless of the radiative forcing source.

That's why the 100 years for 70% equalization is given instead of several thousand years for 99.99999% equalization.
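
As a rough illustration of why a partial-equalization figure is the one usually quoted, here is a minimal sketch. It assumes the response follows a single exponential with one time constant, which is a simplification of my own and not something stated above; the real ocean has several time scales, so the actual tail would be longer. The function names are hypothetical.

```python
import math

def time_constant(fraction, years):
    """Time constant tau (years) such that 1 - exp(-years / tau) == fraction.

    Assumes a single-exponential approach to equilibrium (a simplification).
    """
    return -years / math.log(1.0 - fraction)

def years_to_fraction(fraction, tau):
    """Years needed to reach the given equalization fraction for a given tau."""
    return -tau * math.log(1.0 - fraction)

# Time constant implied by "70% equalization in 100 years"
tau = time_constant(0.70, 100.0)

# Years needed to reach 99.99999% with that same time constant
long_tail = years_to_fraction(0.9999999, tau)

print(f"tau = {tau:.1f} years")
print(f"99.99999% reached after about {long_tail:.0f} years")
```

Even under this single-exponential assumption, the last fraction of a percent takes more than ten times as long as the first 70%. A slower deep-ocean component would stretch that further.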

Lots of ice to melt and sea water at depth to be exposed to the surface. A radiative forcing takes place at the radiative boundary between Earth and space...typically given as the tropopause.

No, the forcing is present in all layers of the atmosphere. It's at the TOA that we measure the imbalance. It's the forcing in the lower troposphere that affects surface air temperatures.

The tropopause is the boundary between the troposphere and the stratosphere. It is highly irregular, and its altitude changes primarily with latitude.

That's OK. I have forgotten a lot since 1974 as well.

It's simply a shift away from a balance between incoming and outgoing radiative energy measured in watts per square meter, radiation being the only means of energy exchange possible.

Well, there is some conduction going on between atmospheric molecules exchanging heat as well.

Again, the downward infrared radiation emanating from the atmosphere does not warm the surface.

Actually, it does to a small extent.

The Sun warms the surface, and some of that sunlight is absorbed directly at some depth below the water surface.

The ocean waters are actually very opaque to IR and very transparent to visible light and UV. That is why the IR forcing is limited to the first few microns of the water, and why more than half the solar energy reaching the surface continues to travel, in some cases hundreds of meters, before being fully absorbed. By 1,000 meters, nearly all light is absorbed, but not completely until you get to about 4,000 meters. Below that, there is no visible light from the surface. Because these energy fluxes reach so deep, they take additional time to equalize to any given percentage.

When melting ice, the phase transition from solid to liquid results in no temperature change.

Yes, I think most here discussing this issue do understand the enthalpy of fusion. Of course there are a few who don't.
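
For anyone who wants the scale of that effect, here is a minimal sketch comparing the energy needed to melt ice with the energy needed to warm liquid water. The constants are standard textbook values (about 334 kJ/kg for the enthalpy of fusion of water ice, about 4.186 kJ/(kg K) for the specific heat of liquid water); the variable names are just illustrative.

```python
# Standard textbook values, rounded
LATENT_HEAT_FUSION = 334_000.0   # J per kg to melt ice at 0 C
SPECIFIC_HEAT_WATER = 4_186.0    # J per kg per K for liquid water

mass_kg = 1.0

# Energy to melt the ice with no change in temperature
melt_energy = mass_kg * LATENT_HEAT_FUSION

# The same energy, applied to liquid water, expressed as a temperature rise
equivalent_warming_k = melt_energy / (mass_kg * SPECIFIC_HEAT_WATER)

print(f"Melting {mass_kg} kg of ice takes {melt_energy:.0f} J")
print(f"That would warm the same mass of water by about {equivalent_warming_k:.0f} K")
```

So melting a kilogram of ice absorbs roughly as much energy as warming a kilogram of water by about 80 K, all without any temperature change during the melt.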

The energy radiated by the surface, mostly in the infrared, is proportional to the 4th power of the absolute temperature. As the temperature of the surface rises so will the total energy the surface radiates away. It's a balance, an equilibrium. CO2 and other greenhouse gases merely slow the loss of energy to space. Additional greenhouse gases upset the radiative balance. Changes in solar radiance upset the balance. The watt is a measure of power and is independent of its source. I see no reason why the lag should differ between solar and greenhouse gas forcing.
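
The 4th-power relationship referred to here is the Stefan-Boltzmann law, which uses absolute temperature in kelvin. A minimal sketch of it follows. The 288 K surface temperature and the emissivity of 1.0 are assumptions chosen only for illustration, and the function name is hypothetical.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W m^-2 K^-4

def radiated_flux(temp_k, emissivity=1.0):
    """Radiated flux in W/m^2 for a surface at absolute temperature temp_k."""
    return emissivity * SIGMA * temp_k ** 4

# Assumed illustrative surface temperature (not a value from this thread)
base = radiated_flux(288.0)
warmer = radiated_flux(289.0)

print(f"Flux at 288 K: {base:.1f} W/m^2")
print(f"Extra flux for 1 K of warming: {warmer - base:.2f} W/m^2")
```

With these assumed numbers, a 1 K rise in surface temperature increases the radiated flux by a little over 5 W/m^2, which is the restoring side of the balance described above.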

The lag is different because at a solid surface, the time from the forcing heating the solid to the re-emission of upward IR is often in the milliseconds. For surface water warmed by IR, it is only marginally longer. However, water heated by shortwave changes takes a comparatively long time for convection processes to bring the imbalance back to the surface.

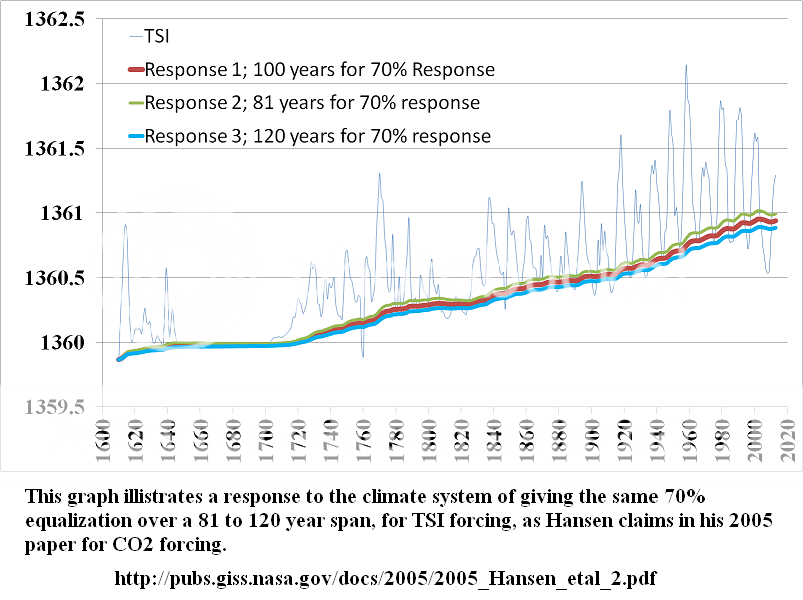

Does this mean that warming is still occurring due to increasing TSI from 50 years back and earlier? I suspect that is the point you wish to make.

Hard to say. Most of the numbers and variable changes I have used place the lagged response to the 1958 solar peak at about 2004, give or take a few years, but this is with solar forcing alone and all other variables stable. Of course, the real world doesn't work that way. If we consider the extra aerosols in the air, increasing from about 1940 to 1980 and then decreasing for another two decades with emission regulations around the world, the solar forcing is modulated to the point that the graph is meaningless as is. The peak could easily be as far out as 2060, just from solar changes. However, if we continue to see the sun quieting in the next cycle or two, I suspect we will see the peak much sooner.